AI Data Annotation & Evaluation Support for AI Teams

Operational Data Systems & Quality-Critical Execution

AI-Powered Marketing & Growth Systems

Data Annotation & Computer Vision for Production AI

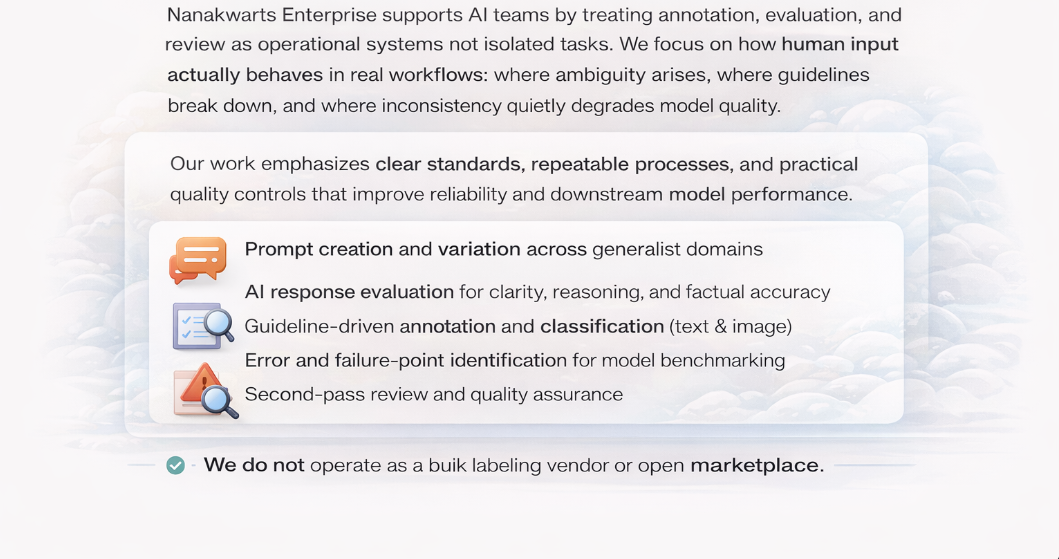

What we support

A systems-driven approach to AI data work

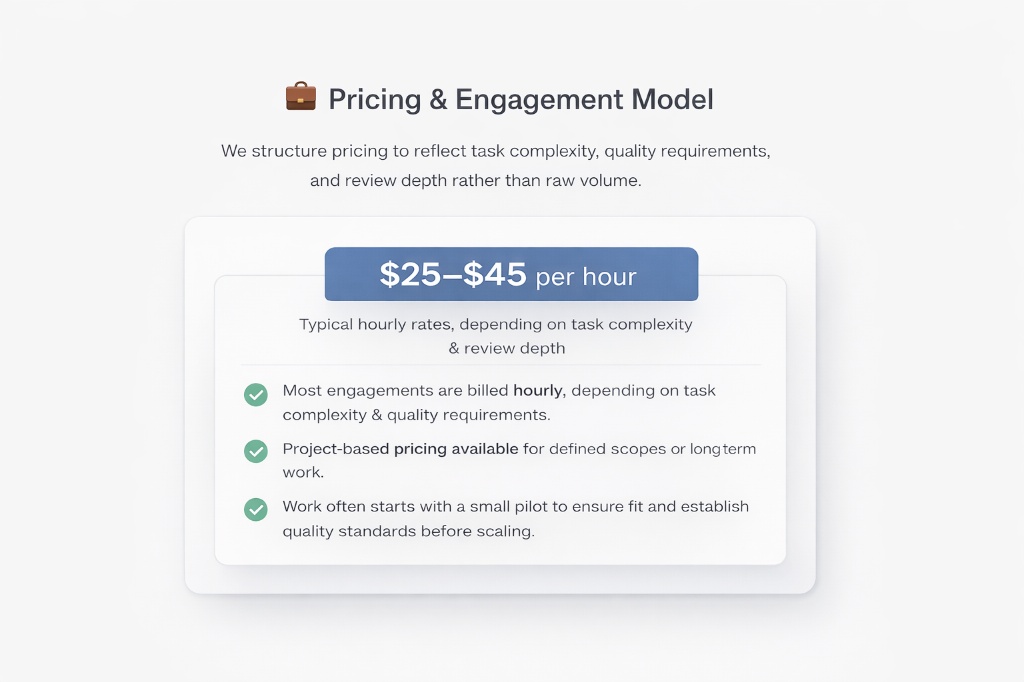

Pricing

Pricing & Engagement Model

Our Team

Distributed Execution & Quality Control

Nanakwarts operates with a small, tightly managed remote execution team trained specifically for guideline-driven review, annotation, and evaluation work. Team members are selected for attention to detail, written clarity, and comfort working with evolving instructions.

Training emphasizes consistency, rubric adherence, and clear escalation of ambiguity rather than speed or output volume. Work is structured with defined ownership, review layers, and quality checks so that outputs remain auditable and reliable as volume increases.

We scale deliberately and only when workflows and standards are stable, prioritizing accuracy and trust over rapid expansion.

How we work

A systems perspective, grounded in operations

Nanakwarts Enterprise works at the intersection of engineering thinking, operational research, and data-driven decision support. We focus on understanding workflows as they exist on the ground, not as they are assumed to work, and on designing practical interventions that improve reliability, throughput, and decision-making. Much of our work begins with diagnostics: mapping processes, identifying constraints, and assessing where data, tools, or automation can realistically help and where they cannot.

DATA & ENGINEERING CONTEXT

Engineering-aware data preparation and validation

In operations-heavy environments, data is rarely clean or self-explanatory. Making it usable requires domain understanding, not just tooling. We support teams working with complex technical artifacts, including LiDAR data, engineering drawings, P&IDs, process flows, and system schematics, by structuring, validating, and reviewing datasets with an explicit understanding of the underlying systems. This work is typically part of early-stage pilots, applied research, or model readiness efforts where errors, ambiguity, or misinterpretation carry real operational risk.

WHAT WE DO IN PRACTICE

What we help with

1. Quality Review & Workflow Validation

We support AI and data teams by embedding into existing workflows to understand how systems function in practice and where reliability breaks down. Our work focuses on structured review and validation of outputs, technical artifacts, and process documentation, carried out on defined schedules and aligned with operational handoff points.

This includes identifying recurring failure patterns, ambiguity in specifications, and coordination gaps across operational, data, and organizational layers, then feeding those insights back into clearer standards and more reliable workflows. We are most effective in environments where accuracy, consistency, and contextual understanding matter more than speed or scale.

We typically engage as a small, execution-focused partner working alongside larger delivery or operations teams. In these contexts, we take ownership of clearly defined review, validation, or data preparation scopes, operating within established guidelines, tools, and handoff processes. This approach allows teams to pilot external support on bounded work, reduce coordination overhead, and maintain consistency, auditability, and accountability across quality-critical workflows.

2. Data foundations for complex systems

Structuring and validating operational data so it is reliable, auditable, and fit for downstream analysis or modeling — particularly in engineering and infrastructure contexts where misinterpretation carries real operational risk.

Our work often involves reviewing and normalizing data derived from technical sources such as engineering drawings, schematics, LiDAR outputs, process documentation, or system logs. We focus on ensuring that data reflects the reality of the underlying system, not just the format it is captured in.

We support teams through ongoing data review and quality control processes, helping establish clearer standards, resolve ambiguity, and maintain consistency across datasets as systems evolve over time.

3. Selective automation & decision support

Designing narrowly scoped tools, scripts, or decision-support mechanisms that reduce manual effort or improve operational decisions — implemented only where value is clear, measurable, and sustained.

This work typically follows from earlier diagnostics or data foundation efforts and is focused on supporting existing workflows rather than replacing them. Examples include lightweight automation for recurring checks, structured decision aids, or internal tools that improve consistency, traceability, or turnaround time.

We prioritize simplicity, transparency, and operational fit, avoiding large or speculative builds and scaling only when results justify it.

Mission

Our Mission: Make Complex Operations Reliable and Practical

Our approach combines systems thinking, applied analysis, and selective automation deployed only where value is clear and sustained.

FAQ

Frequently Asked Questions

What type of companies do you work with?

We work with operations-heavy businesses (logistics, FMCG, construction, retail, and data-driven teams) that deal with manual processes, fragmented data, or early-stage AI initiatives. If your team spends too much time cleaning data, coordinating workflows, or validating outputs, we’re likely a good fit.

Do you build full AI products or internal systems?

Primarily internal systems. We focus on data operations, automation, and AI enablement that support real business workflows, not flashy demos or overengineered products. Where it makes sense, we also support pilot AI features that can later scale.

How do you start an engagement?

We start small and practical. Most engagements begin with a short discovery phase focused on one clearly defined problem. From there, we implement a pilot or proof-of-value solution. This allows teams to see measurable results before committing further or scaling.

Do you provide data annotation and QA services?

Yes, as part of building reliable AI systems. We provide structured data annotation and quality assurance workflows that support real operational use cases, not generic labelling at scale. This includes dataset preparation, validation, and review processes designed to improve model performance and trustworthiness. Work is scoped carefully and paired with QA checks so outputs are consistent, auditable, and ready for downstream analytics or model training.

Are you a good fit for early-stage teams or pilots?

Absolutely.

In fact, we’re often best suited for early-stage or first-time AI adopters who want to prove value before scaling. We prioritize clarity, measurable impact, and operational usefulness over hype.

How do you price your work?

Pricing depends on scope and complexity. We typically work on fixed-scope pilots or short-term engagements with clearly defined deliverables. This keeps costs predictable and aligned with results.

Do you replace internal teams?

No.

We work alongside internal teams — enabling them, improving workflows, and transferring knowledge where appropriate. Our goal is to reduce manual work, not replace people.